We can assume that at least some of those aren't holding customer data in the conventional sense? They're caches or metadata or boot devices? That's a bit of a change from their spinning drive data, which is essentially 100% customer data.

Again, it's probably still the best data we can get, and seeing that the bathtub-curve assumption basically holds for SSDs too is really helpful.

Unless they're offering speed tiers, SSDs don't make sense for raw data storage in a price-sensitive business. Beyond that, spinning drives are where the $/GB is. This coincidentally happens to intersect with the segment of drives that average consumers are interested in; if Backblaze only used enterprise-class drives, far fewer people would pay attention to their statistics.

From a storage server perspective: if I were emulating a Backblaze pod (which I get pretty close to in my day job), I'd definitely want my boot device on a fast SSD (so those Dell BOSS cards obviously make sense there), and there's a strong case to be made for storing special data/metadata on SSDs for something like ZFS.

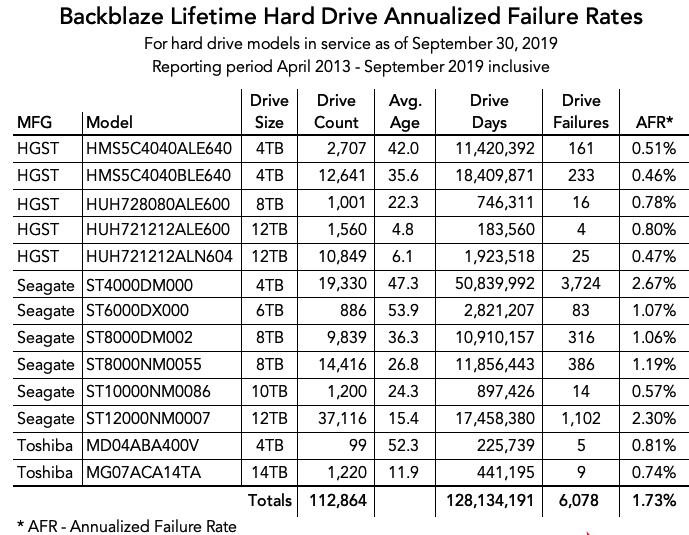

They're not intentionally "punishing" commodity drives; that's simply where the best value for them comes from. Their goal is to store as much data as cheaply as possible, and SAS SSDs are the antithesis of that. The nature of their business simply doesn't warrant SSD performance, so unless and until the price per TB of SSDs matches or drops below that of mechanical drives, they aren't going to be incentivized to adopt them at scale.

They've said it in previous reports: even if a particular series of drive has higher-than-normal failure rates, if the price is right, they'll keep using them because it's still cheaper that way. Hence the large installed bases of drives that otherwise seem to fail often.

Remember that Backblaze's priority is their business of storing data; compiling drive statistics is just something they do as part of that.

Considering they also have a bunch of BOSS arrays in the data, it sounds like at least some of the drives are boot drives or serve some other high-speed, high-reliability role.

Also, since I normally follow the HDD stats more closely, I'm kind of annoyed that they don't separate out the two kinds of SSD failures: the drive's endurance is exceeded and it is out of spare sectors or has gone into read-only mode from old age, versus an outright controller failure. Outright controller failures are less common now, but they're terrifying zero-warning events that are generally unrecoverable. That's very different from skimming drive/SMART data, seeing that a drive is starting to fail, and being proactive about replacing it.

I know they're all about punishing cheap commodity drives, but I'm kind of surprised they didn't use, say, some 12 Gbit SAS SSDs. The <1TB capacities honestly aren't that expensive, and you can go pretty big: ~6-7TB for under 2 grand. If you really need speed, that's a reasonable compromise over the highest density HDDs.

I'm honestly shocked: they're not just using commodity drives, they're using what are generally among the lowest end commodity drives. $/GB is important, but they have to be getting some sweetheart deals on the ~250 GB class for that to make a lot of financial sense.

I know that's not how statistics works, but that poor single 250 GB class Seagate that crapped the bed after 4 months looks really bad in their data. The presentation on that one is really confusing. Exactly how long did it live? Was it idle for some of the time? Did it die by throwing errors, or did it just have a controller failure and poof, dead? That's anecdotal but would be really helpful and interesting to know.
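To put a rough number on why that single Seagate looks so bad: as I understand it, Backblaze annualizes failures over drive days, so one failure on a tiny drive-day count blows up. Here's a minimal sketch of that arithmetic. The function name, the 120-day figure for "4 months", and the comparison fleet are all my own assumptions for illustration, not numbers from their report.

```python
def annualized_failure_rate(failures: int, drive_days: float) -> float:
    """AFR in percent: failures per drive-year of service, times 100."""
    if drive_days <= 0:
        raise ValueError("drive_days must be positive")
    drive_years = drive_days / 365
    return failures / drive_years * 100


# Assumed: a model with exactly one drive, which failed after ~4 months.
single = annualized_failure_rate(failures=1, drive_days=120)

# Assumed comparison: 1,000 drives in service for a full year, 10 failures.
fleet = annualized_failure_rate(failures=10, drive_days=1000 * 365)

print(f"single drive: {single:.0f}%  fleet: {fleet:.1f}%")
```

With those made-up inputs the lone drive comes out at roughly 300% annualized against 1% for the fleet, which is why a one-drive sample says almost nothing about the model.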
